Blog

Insights into how the best teams ship faster without sacrificing the experiences their customers depend on

How MCP and AI are changing Enterprise QA in 2026

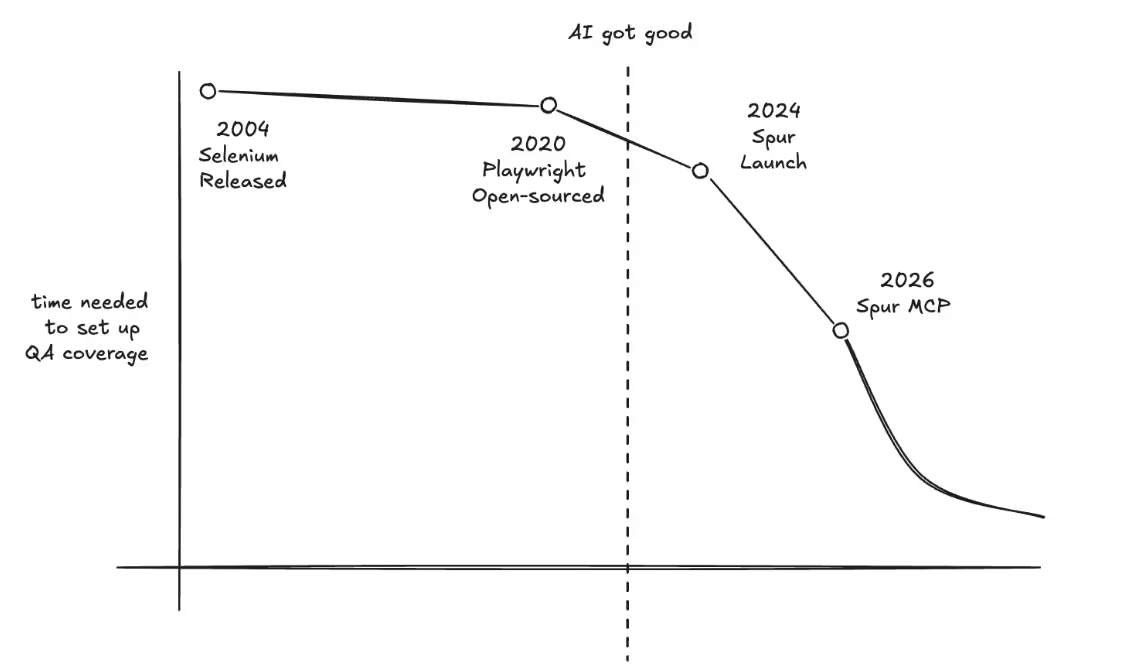

Throughout the history of automated QA testing, there have been two big inflection points that drove great leaps in QA productivity. Today, we introduce the third.

The first was the introduction of ergonomic test scripting frameworks such as Playwright. QA engineers could automate dozens of flows and test stable pages with scripts that ran through selectors. Teams built processes around this: test-driven development, and requiring tests with every feature.

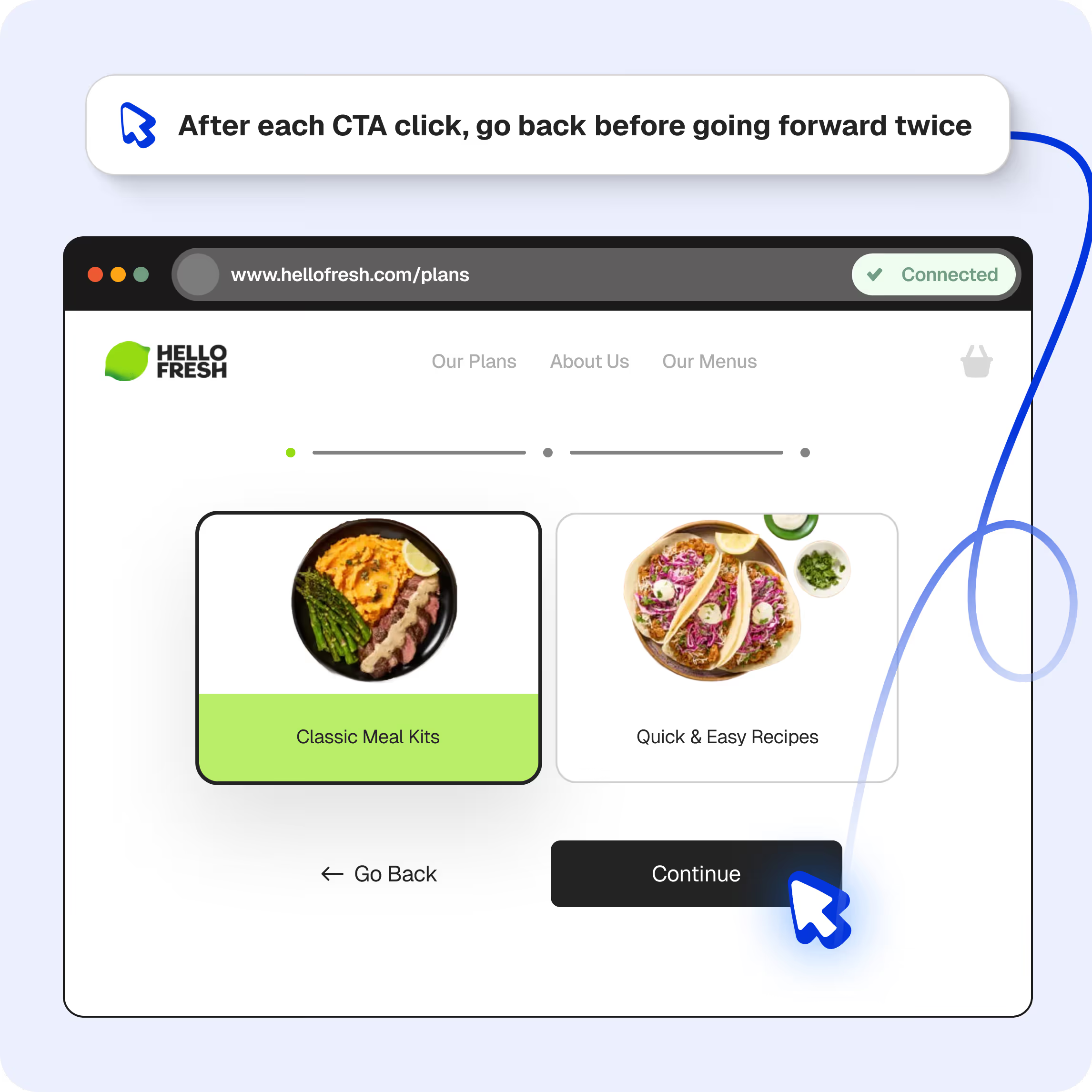

The second unlock was the advent of agents and LLMs. With each new SOTA model, brittle selectors and script syntax became less necessary. With products such as Spur, automated QA has moved out of the realm of niche technical knowledge and into pure systems-thinking. QA stopped being limited by a mastery of automation languages.

A QA engineer using Spur now spends their time thinking about what needs to be tested and managing a swarm of agents to surface new insights.

Today, we are proud to launch the third jump in QA productivity with the release of our MCP. We’ve greatly expanded Spur’s agentic capabilities. AI can now handle the entire testing loop: the only thing a QA needs is intent, while Spur handles all the busy work.

What is an MCP?

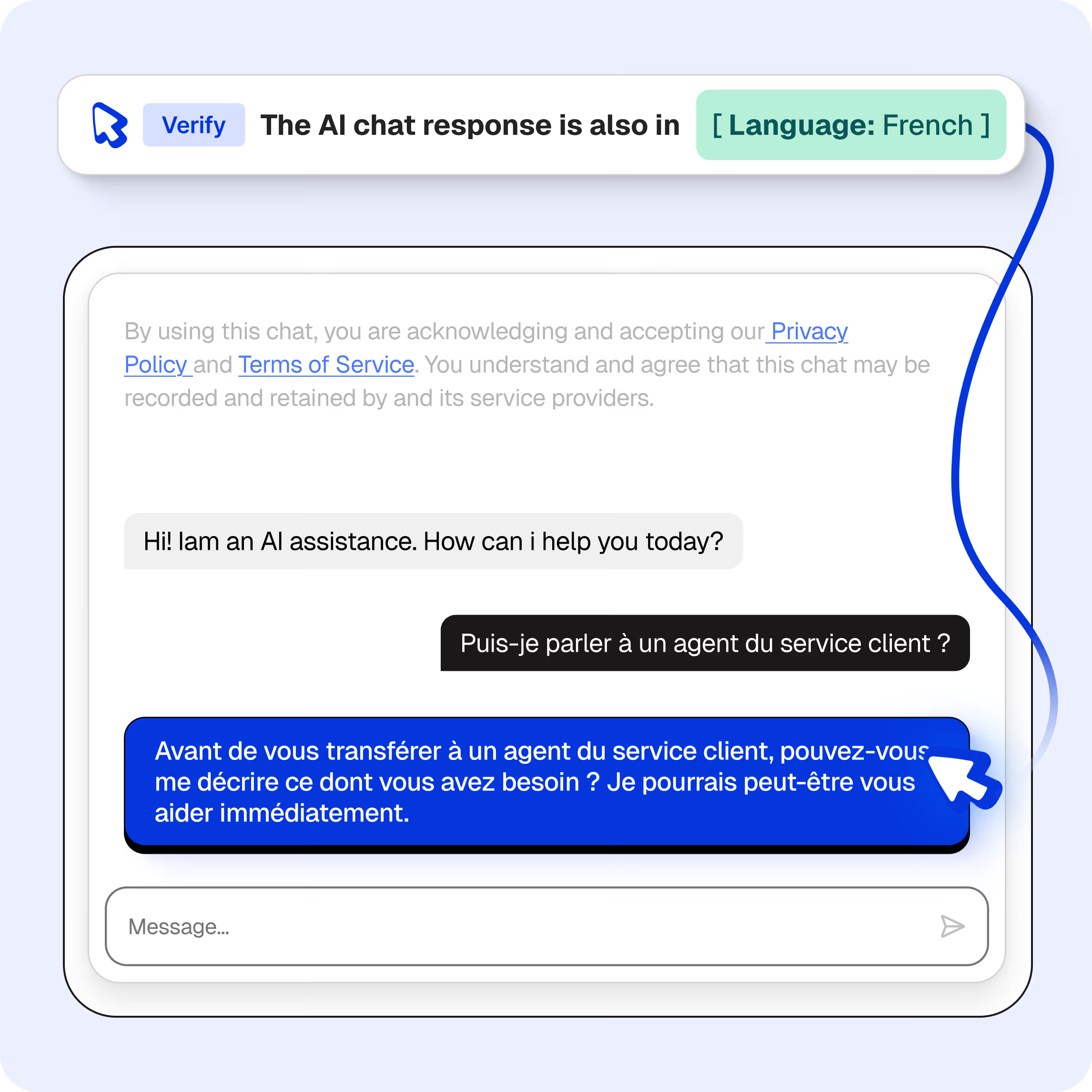

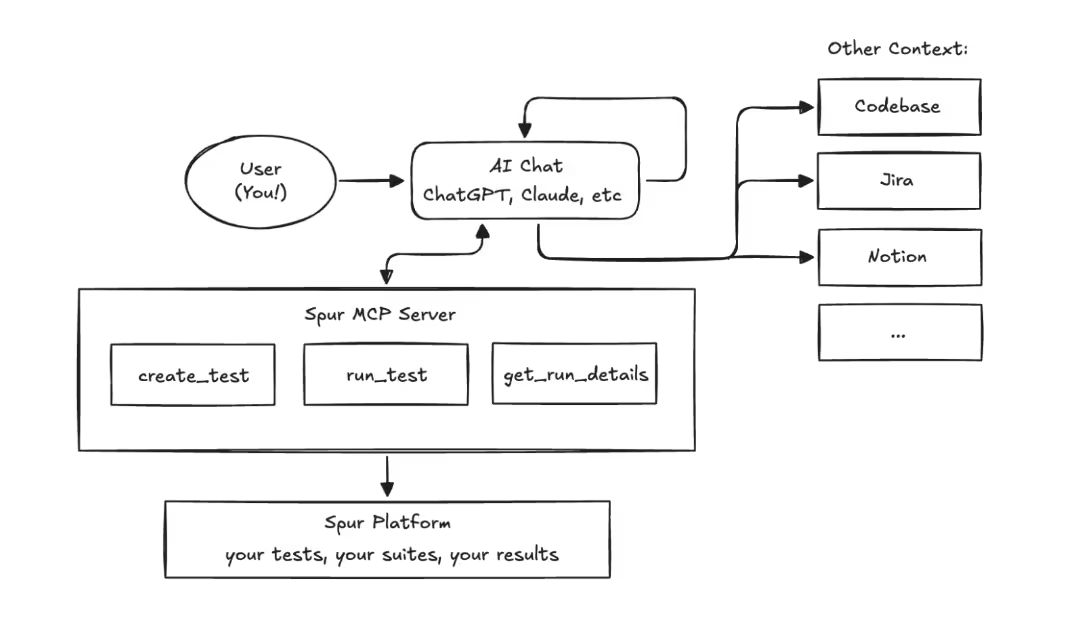

MCP (Model Context Protocol) is an open protocol introduced by Anthropic that has since become the primary way for AI models to use third-party features. An MCP server exposes a product’s features through that protocol. The Spur MCP allows your AI chats or agents (ChatGPT conversations, Claude agents, Copilot chats, …) to use Spur itself: writing tests, running them, even analyzing them. We’ve built parity with almost every feature of our application, so your AI agents have the full capability to operate your testing.
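For readers who have not looked under the hood, here is a rough sketch of what an agent sends when it invokes a tool over MCP. The protocol uses JSON-RPC 2.0 messages, and tool invocations go through the `tools/call` method. The tool name `run_test` and its arguments are hypothetical placeholders for illustration, not Spur's actual tool names.

```python
import json


def build_tool_call(request_id: int, tool_name: str, arguments: dict) -> str:
    """Serialize an MCP tool invocation as a JSON-RPC 2.0 request."""
    message = {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }
    return json.dumps(message)


# "run_test" and "test_name" are made-up names used only for this example.
request = build_tool_call(1, "run_test", {"test_name": "checkout happy path"})
print(request)
```

In practice the AI client builds and sends these messages for you; the point is only that each Spur capability becomes a named tool the agent can call with structured arguments.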

A note about AI hallucinations: The Spur MCP server is used by your AI chats, so improper outputs from the model are not under our control. However, we have introduced several safeguards, including requiring approval for any potentially destructive action.

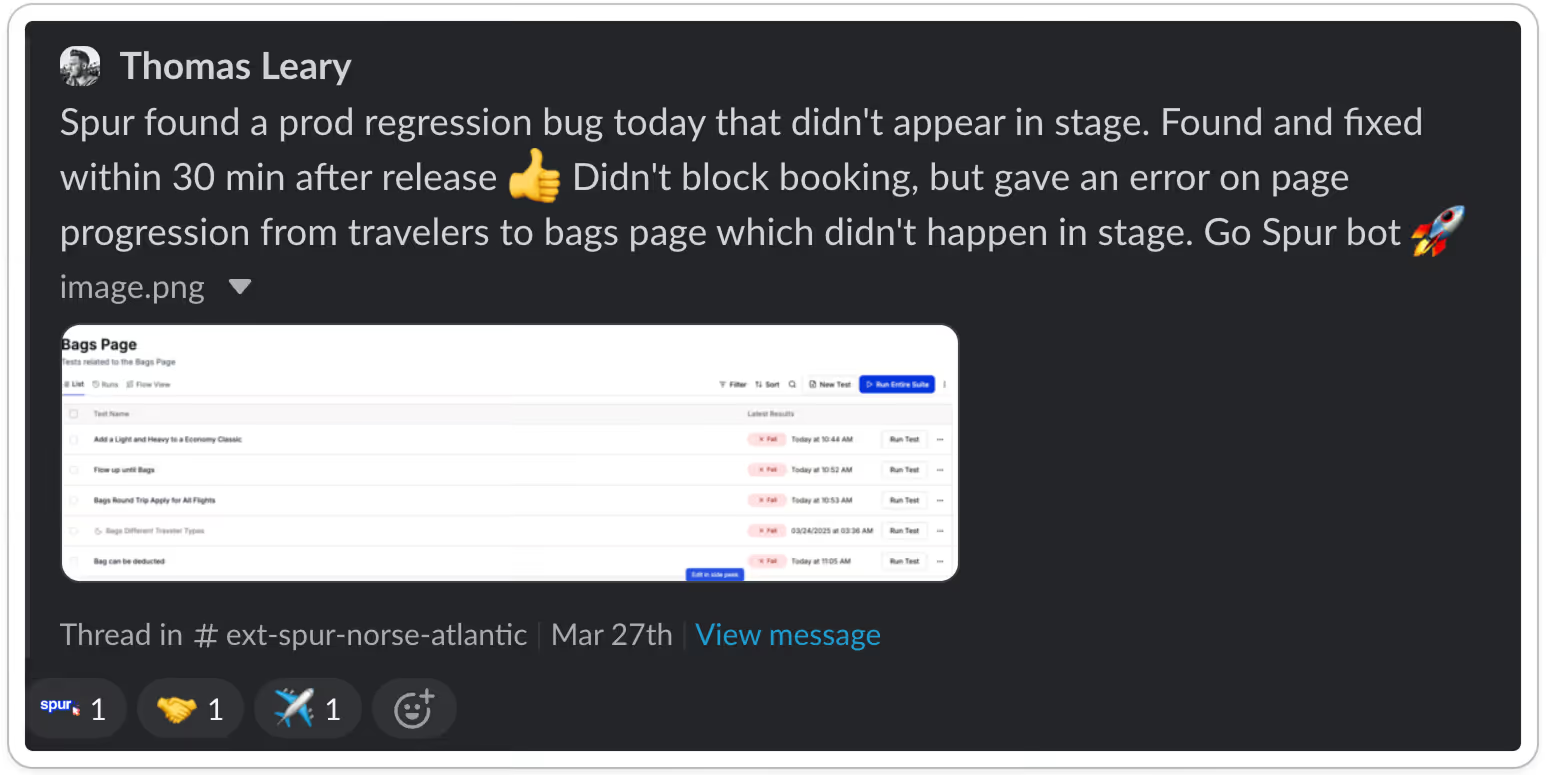

This feature has been available to all customers for a few weeks now, and we are already seeing extremely powerful uses of these tools.

Use Case 1: Writing Tests

Early in our development of Spur, the idea of generating the tests themselves was an enticing one. The problem was that AI-generated tests were often aimless and would assume large jumps in the user flow. Since then, two big changes have occurred. The first is the jump in model capabilities. The gap between the agents and models of even late 2025 and those available now is the difference between a frustrating generation experience and a fantastic, even fun one. The second was developing the MCP to bring novel context to the agent. Some users run the MCP inside their codebase, giving the agent complete context for test writing, while others look internally at their own tests, learning from past test runs to map out their product. Better intelligence and better context led to shockingly good tests.

A big focus for us at Spur was how we could develop this feature while keeping enterprise-level diligence. QA has long trailed behind development in adopting AI features, as hallucinations are unacceptable in the last line of defense. Early adopters of the Spur MCP have shown us ways they use the MCP to bulletproof their QA.

We’ve given agents the ability to operate Spur, to create tests and run them. A beta user of this feature showed us his Skills – files that contain instructions for the agent. His create-tests Skill instructs the agent to first review existing tests and pick up their writing style before creating new ones. Because agents control Spur, they can then continuously run the new tests and review the results, polishing them until they are production-ready. This continuous polish allowed him to create production-ready tests from one prompt.

We have long told our customers to treat the Spur agent like a colleague who is new to their product. Now, users can treat the Spur agent like a senior colleague – one who’s learned the product inside and out.

Use Case 2: Complete Testing Loop in CI/CD

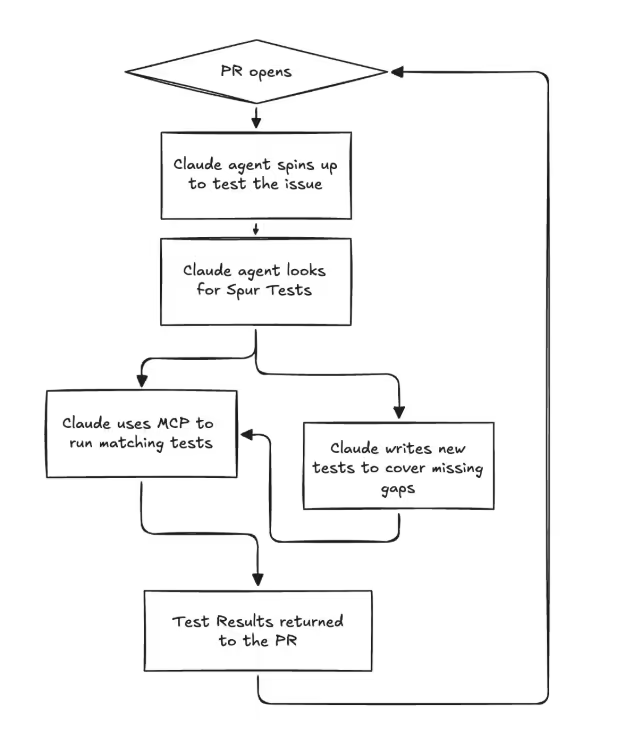

Our customers continue to inspire us. An engineering firm we work with showed us the workflow they’ve set up with MCP, which we very quickly folded into our own dogfooding process. A PR opens, and a testing agent is instantly spun up. The agent looks over available Spur tests, finds the ones that test the feature, writes new tests if those don’t exist, and fully tests the feature. For teams that are always finding themselves ahead of their testing (like us!) this changes everything.
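The selection step in that workflow can be sketched roughly as follows. This is a simplified illustration, not how the customer or Spur implements it: the `SpurTest` record, the `src/<feature>/...` path convention, and the feature tags are all assumptions made up for the example.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class SpurTest:
    # Hypothetical record: a test name plus the feature areas it exercises.
    name: str
    features: frozenset[str]


def feature_of(path: str) -> str:
    # Assumed repo convention: src/<feature>/... ; anything else is "shared".
    parts = path.split("/")
    return parts[1] if len(parts) > 2 and parts[0] == "src" else "shared"


def plan_run(changed_files: list[str], tests: list[SpurTest]) -> tuple[list[str], list[str]]:
    """Return (existing tests to run, features that still need new tests)."""
    touched = {feature_of(path) for path in changed_files}
    to_run = [t.name for t in tests if t.features & touched]
    covered = set().union(*(t.features for t in tests))
    needs_new_tests = sorted(touched - covered)
    return to_run, needs_new_tests


tests = [
    SpurTest("checkout happy path", frozenset({"checkout"})),
    SpurTest("login with password", frozenset({"auth"})),
]
changed = ["src/checkout/cart.ts", "src/wishlist/index.ts"]

to_run, needs_new_tests = plan_run(changed, tests)
print("run:", to_run)
print("write tests for:", needs_new_tests)
```

An agent working through MCP would make these decisions from the diff and the test descriptions rather than from fixed tags, but the shape of the loop is the same: run what exists, write what is missing.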

Test coverage automatically grows with your application.

“The future will increasingly be built for agents” is a common statement nowadays. By making every possible operation on Spur accessible to agents, workflows such as choosing, creating, and running only the relevant tests on feature push move from wishful thinking to production-ready. At Spur, we are building QA for agents.

What does a QA look like now?

Software developers have long felt the productivity benefits of AI, while QA was often left behind as an afterthought. The Spur MCP gives QA teams the 10x productivity jump they so desperately need. Creating resilient, useful, and valuable tests has never been easier, to the point where 100% test coverage can become the starting point rather than the distant goal. That is an important milestone as code is pushed faster and faster.

And yet, quality-thinking has never been more important. Spur can create, perform, and analyze tests, but knowledge and expertise are needed to identify where platforms are likely to break, and to direct Spur. The QA of today, using Spur, is no longer running through flows manually, writing brittle Playwright scripts, or writing Spur tests by hand. They are directing and managing agents to cover more application surface than entire teams could.

We are excited to release these use cases as Agent Skills for your team to use today. Book a demo here!

We are hiring across engineering and sales to build the 10x productivity boost to QA so necessary in the age of 10x developers. Come join us!

The Hidden QA Tax on Data Science Teams

Nobody budgets for tracking QA

Ask a data science team what slows them down and you'll hear the usual suspects: messy data, unclear requirements, stakeholder whiplash. Fair enough. But there's another time sink that rarely gets mentioned because it doesn't feel like "real work" — manually verifying that analytics events actually fire correctly.

Every deploy cycle, someone on the team opens Chrome DevTools, clicks through a user flow, eyeballs the Network tab, and checks whether the right events showed up with the right payloads. It's tedious. It's error-prone. And it eats way more hours than anyone wants to admit.

The problem with silent failures

Here's what makes tracking QA particularly painful: when it breaks, nothing visibly breaks. The site works fine. Users don't complain. But behind the scenes, events stop firing, required fields go missing, data types shift from strings to numbers, and third-party pixels get quietly dropped.

You don't find out until someone pulls a report two weeks later and the numbers look wrong. By then, the data gap is permanent. You can't backfill events that never fired.

We talk to data teams regularly and the pattern is consistent:

- About half of tracking issues are events that simply stopped firing after a code change

- Another 40% are missing or malformed fields in the payload

- The remaining 10% are subtler — wrong data types, casing changes, format drift

None of these throw errors. None of them show up in monitoring dashboards. They just silently corrupt your data.

What manual QA actually costs

Let's be honest about the math. A site with 30+ tracked events across multiple regions, browsers, and environments creates thousands of combinations to check. No one checks all of them. Teams spot-check the important flows and hope for the best.

That looks something like this every release cycle:

- Open DevTools on the target page

- Click through the flow (product view, add to cart, checkout)

- Search the Network tab for the right request

- Manually inspect each payload field

- Screenshot as evidence

- Cross-reference with whatever analytics platform you're using

- Repeat for every region and browser combination you have time for

- File a ticket if something looks off

Realistically, this takes 2-4 hours per validation cycle. And because it's manual, coverage sits around 30% at best. The other 70% is trust and luck.
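To make the math concrete, here is a back-of-the-envelope sketch. The specific counts (6 regions, 4 browsers, 3 environments) are illustrative assumptions; only the 30 events and the roughly 30% coverage figure come from the numbers above.

```python
from itertools import product

# Illustrative inventory; the region, browser, and environment lists are assumptions.
events = [f"event_{i}" for i in range(1, 31)]
regions = ["US", "UK", "DE", "FR", "JP", "AU"]
browsers = ["chrome", "safari", "firefox", "mobile-safari"]
environments = ["dev", "staging", "production"]

combinations = list(product(events, regions, browsers, environments))
total = len(combinations)

# Integer arithmetic for "around 30% coverage".
checked = total * 30 // 100
unchecked = total - checked

print(f"{total} combinations, {checked} spot-checked, {unchecked} left to trust and luck")
```

Adding one more region or browser multiplies the total rather than adding to it, which is why manual coverage falls behind so quickly.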

Here's the part that stings: every hour spent in DevTools is an hour not spent on actual analysis. Data scientists didn't sign up to be QA engineers for tracking implementations. But someone has to do it, and it usually falls on the people who understand the data best.

Why this gets worse over time

Tracking implementations aren't static. New events get added. Existing events get modified. Third-party scripts update themselves without warning. Marketing asks for new UTM parameters. The consent management platform changes behavior.

Each change is a new surface area for breakage. And because the QA is manual, the gap between what's tested and what's deployed keeps growing. Teams that were keeping up six months ago are now drowning, and they can't always explain why — it just takes longer to validate everything.

Multi-region and multi-brand setups make this exponentially worse. An event that works fine on the US site might be broken on the UK site because of a locale-specific code path nobody thought to check.

What automated validation looks like

The fix isn't hiring more people to stare at DevTools. It's automating the validation itself.

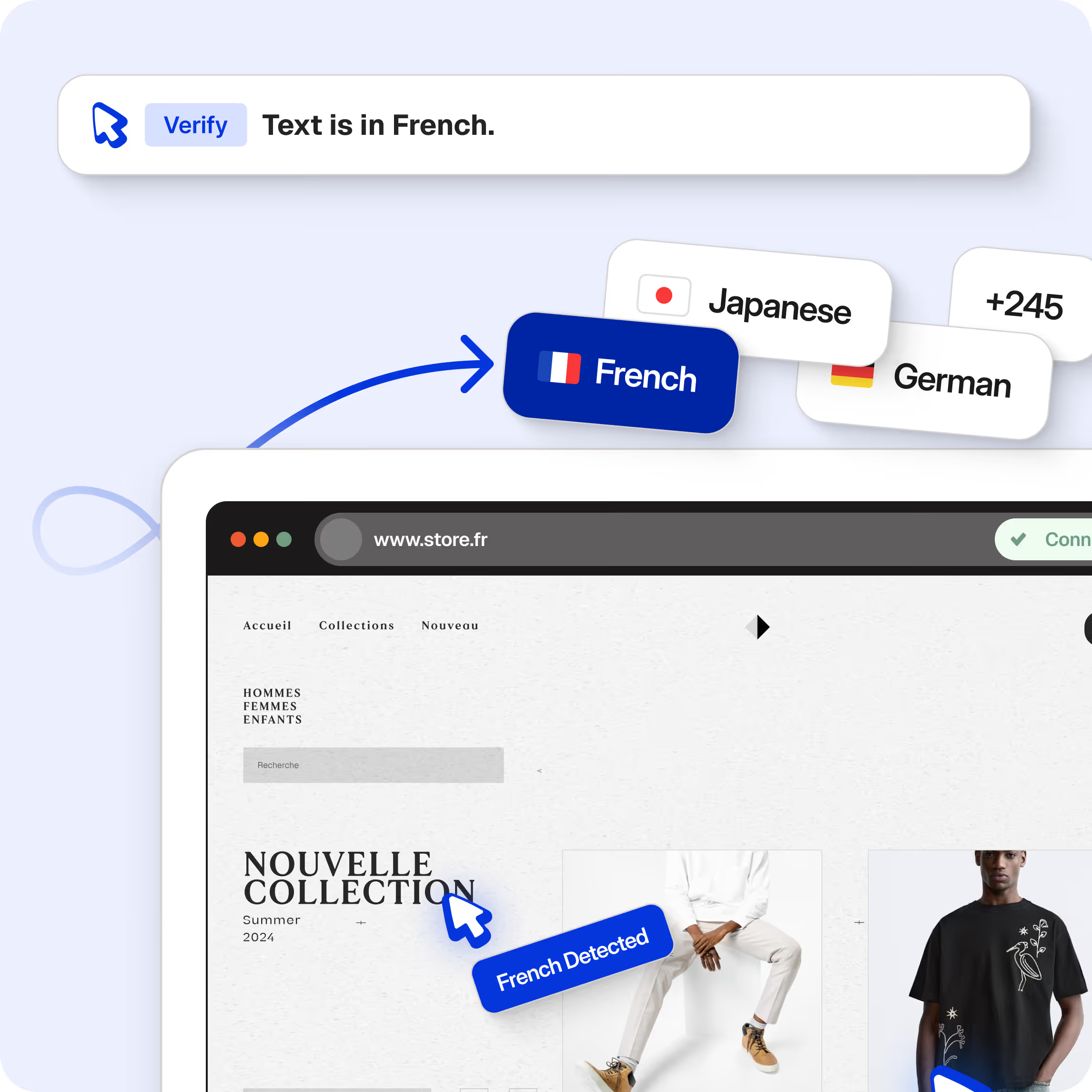

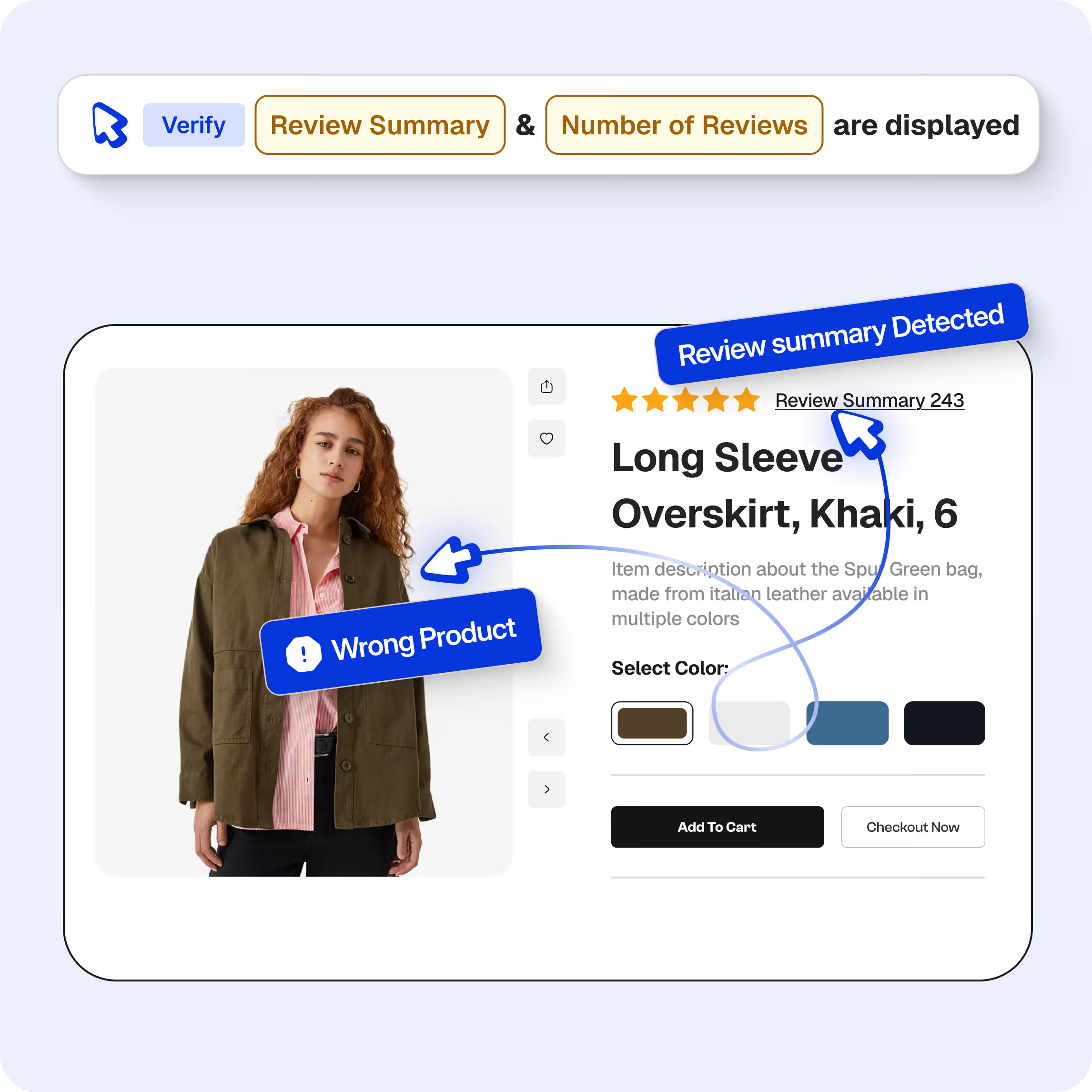

Spur replaces the manual DevTools workflow with an AI agent that runs a real browser, performs user flows exactly like a human would, captures all network traffic, and validates event payloads against your expectations. You describe what to check in plain language — "confirm the purchase event contains order_id, revenue as a number, and items as an array" — and the agent handles finding the request, parsing the payload, and reporting pass/fail with the actual data it found.
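Under the hood, a check like the one quoted above boils down to comparing a captured payload against an expectation. The sketch below is a minimal illustration of that idea, not Spur's implementation; the `EXPECTED_PURCHASE` mapping and the sample payload are made up, and treating `order_id` as a string is an assumption.

```python
from numbers import Number

# Field name -> expected Python type. order_id as str is an assumption.
EXPECTED_PURCHASE = {"order_id": str, "revenue": Number, "items": list}


def validate_event(payload: dict, expected: dict) -> list:
    """Return a list of human-readable problems; an empty list means pass."""
    problems = []
    for field, expected_type in expected.items():
        if field not in payload:
            problems.append(f"missing field: {field}")
            continue
        value = payload[field]
        # bool is a subclass of int, so reject it explicitly to avoid
        # True/False passing as a number.
        if isinstance(value, bool) or not isinstance(value, expected_type):
            problems.append(f"wrong type for {field}: got {type(value).__name__}")
    return problems


# Sample captured payload: revenue drifted from a number to a string.
captured = {"order_id": "A-1001", "revenue": "49.99", "items": [{"sku": "SHOE-42"}]}
print(validate_event(captured, EXPECTED_PURCHASE))
```

This is exactly the kind of silent type drift described earlier: the site works, the event fires, and the report is still wrong.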

The same validation that takes a person 2-4 hours runs in under 10 minutes. Every field gets checked, every time. Across Chrome, Safari, and mobile. Across regions. In parallel.

You schedule it to run after every deploy, or daily, or both. When something breaks, you know within minutes — not two weeks later when a report looks wrong.

Where to start

If you're on a data team dealing with this, start with the one event that would cause the most damage if it broke. For most teams that's the purchase or order confirmation event — it's tied directly to revenue attribution and commission payouts.

Document what "correct" looks like: the event name, every required field, expected data types, format rules. Then automate that single check and schedule it to run on every deploy.

Once that's solid, expand to your next highest-priority event. Within a few weeks you'll have automated coverage over the flows that matter most, and your team can get back to the work they were actually hired to do.

The real cost isn't the hours

The hours matter, yes. But the bigger cost is what happens when broken tracking goes undetected. Bad data leads to bad dashboards, which leads to bad decisions. Attribution models trained on incomplete data misallocate budget. A/B tests with corrupted event data produce meaningless results.

Most data teams have experienced this at least once — the sinking feeling of realizing that a key metric has been wrong for weeks because an event silently stopped firing. That's the real tax. And it's entirely preventable.

Why Teams Are Moving Past Selenium

We all know what happens next

Someone ships a promo banner update. Checkout breaks on mobile Safari. A customer screenshots it on Twitter before Slack even lights up.

Every e-commerce team has this story. Most have it more than once.

The standard playbook is Selenium or Cypress. Write a test, pin it to a CSS selector, pray the selector survives the next sprint. It usually doesn't. A designer moves a button, the merchandising team swaps a carousel, and suddenly half your suite is red. Not because anything is actually broken. Because your tests are brittle.

Manual QA catches what automation misses, but it doesn't scale. You can't manually click through 400 checkout permutations before every deploy. So teams do what teams do: they ship and hope.

What is agentic QA?

Agentic QA replaces brittle test scripts with AI agents that interact with your site visually, the same way a real customer would.

Instead of telling a script "click the element with id=checkout-btn," you tell an AI agent "go buy something." The agent looks at the page, figures out where the checkout button is, and clicks it. When someone redesigns the page, the agent still finds the button. It doesn't care that the class name changed. It can see.
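The brittleness is easy to reproduce without a browser. The sketch below uses Python's standard `html.parser` to compare two lookups on a page before and after a redesign: one keyed on the element id, one keyed on the text a customer would actually read. Matching on visible text is only a rough stand-in for what a vision-based agent does, and both HTML snippets are invented for the example.

```python
from html.parser import HTMLParser


class ButtonFinder(HTMLParser):
    """Collects (id, visible text) for every <button> on a page."""

    def __init__(self):
        super().__init__()
        self.buttons = []
        self._in_button = False
        self._current_id = None
        self._text = []

    def handle_starttag(self, tag, attrs):
        if tag == "button":
            self._in_button = True
            self._current_id = dict(attrs).get("id")
            self._text = []

    def handle_data(self, data):
        if self._in_button:
            self._text.append(data)

    def handle_endtag(self, tag):
        if tag == "button" and self._in_button:
            self.buttons.append((self._current_id, "".join(self._text).strip()))
            self._in_button = False


def find_buttons(html):
    finder = ButtonFinder()
    finder.feed(html)
    finder.close()
    return finder.buttons


def found_by_id(html, element_id):
    return any(button_id == element_id for button_id, _ in find_buttons(html))


def found_by_visible_text(html, label):
    return any(label.lower() in text.lower() for _, text in find_buttons(html))


before = '<button id="checkout-btn" class="btn-primary">Checkout</button>'
after = '<button id="cta-main" class="button--large"><span>Checkout</span></button>'

print("id lookup:  ", found_by_id(before, "checkout-btn"), found_by_id(after, "checkout-btn"))
print("text lookup:", found_by_visible_text(before, "checkout"), found_by_visible_text(after, "checkout"))
```

Nothing a customer can see changed between the two versions, yet the id-based lookup fails on the second one.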

You write tests in plain English. Something like:

"Search for blue running shoes. Add the first result to cart. Apply coupon SAVE20. Go through checkout. Confirm the discount shows up."

That's the whole test. No page objects, no locator strategies, no framework boilerplate. If your site changes next week, the same test still works.

Why e-commerce teams need this most

Most SaaS apps have a relatively stable UI. You build a dashboard, it stays a dashboard. E-commerce is different.

Constant UI changes

Product pages change daily. Promos rotate. A/B tests shuffle layouts. Search results are personalized. The homepage during Black Friday looks nothing like the homepage in February. Selector-based tests can't handle this kind of churn. We've talked to teams where 60-70% of their automation effort goes to maintenance, not new test coverage.

Checkout bugs cost real money

A broken checkout flow isn't just a bug report. It's lost revenue, every minute it's live. Agentic QA tests the full purchase flow end-to-end on every deploy, across payment methods, currencies, and regions, without someone writing a separate script for each combination.

Seasonal pressure

You need the most testing coverage during Black Friday and holiday sales, which is exactly when your team has the least bandwidth to babysit flaky tests. Agentic tests scale without hiring contract QA or pulling engineers off feature work.

Multi-geography complexity

Selling globally means testing across currencies, languages, tax rules, and shipping options. AI agents can run these combinations in parallel without a separate test file for every locale.

What the results actually look like

We've been running agentic QA with e-commerce teams for a while now. Here's what consistently shows up:

- Flake rates drop to nearly zero. Selector-based suites typically hover around 80-90% pass rates because of environmental flakiness. Vision-based agents either see the right thing or they don't. Less ambiguity, fewer false failures.

- Test creation goes from days to minutes. Writing a Selenium test for a checkout flow can take a full day once you include setup, data seeding, and debugging. Describing the same flow in English takes about 10 minutes.

- 95% test coverage within the first month. Teams aren't spending weeks scripting. They're describing flows and shipping coverage fast.

- Maintenance mostly disappears. When the UI changes, the tests adapt. You're not rewriting locators every sprint.

How to get started with agentic QA

Nobody should rip out their existing test suite on day one. The smarter approach:

- Pick your highest-stakes flows. Checkout, account creation, search, product pages. The stuff that costs you money when it breaks.

- Run agentic tests alongside your current suite. Compare coverage and reliability side by side. See which approach catches more real bugs and which one breaks less often for fake reasons.

- Migrate gradually. Most teams we work with start moving over within a couple of weeks once they see the side-by-side results.

You don't need to learn a new framework or hire automation engineers. If you can describe what your site should do, you can write agentic tests.

The future of e-commerce testing

E-commerce testing complexity is going up. Headless storefronts, AI-generated product content, hyper-personalization, multi-channel selling. The surface area keeps growing, and writing individual scripts for all of it isn't sustainable.

Agentic QA is still relatively early, but the direction is clear. Tests that can see and adapt will replace tests that rely on structural assumptions about your HTML. It's already happening.

If you want to try it, Spur can get your first tests running in about 10 minutes. No scripts, no framework setup. Just describe what your site should do and watch it run.


Spur 2025 Feature Highlights

2025 was a big year for Spur. Here's a look at the features that changed how teams create and run automated tests using natural language, from smarter ways to model scenarios and organize suites to richer execution, debugging, and integrations that plug directly into the tools teams already use every day.

Scenario Tables

Scenario Tables help you create dynamic tests that handle multiple scenarios within a single user flow using parameterized test data.

Instead of maintaining many nearly identical tests with different inputs, you define one test and run it through multiple rows of data, which reduces redundancy, improves maintainability, and makes it easier to cover variations and edge cases.
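Conceptually, this is the familiar parameterized-test pattern: one flow, many rows. Here is a minimal sketch of the idea in plain Python; the template wording, column names, and row values are invented for illustration and do not reflect Spur's actual Scenario Table format.

```python
# One flow written once, with placeholders for the values that vary.
FLOW_TEMPLATE = (
    "Search for {query}. Add the first result to cart. "
    "Apply coupon {coupon}. Confirm the discount shows {expected_discount}."
)

# Each row is one scenario; column names match the placeholders above.
scenario_table = [
    {"query": "blue running shoes", "coupon": "SAVE20", "expected_discount": "20%"},
    {"query": "wool socks", "coupon": "WELCOME10", "expected_discount": "10%"},
    {"query": "rain jacket", "coupon": "EXPIRED", "expected_discount": "no discount"},
]


def expand(template: str, rows: list) -> list:
    """Produce one concrete test description per row of data."""
    return [template.format(**row) for row in rows]


for test in expand(FLOW_TEMPLATE, scenario_table):
    print(test)
```

Covering a new edge case means adding a row, not copying a test.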

Environments and Browsers

Spur lets you run the same test suites across multiple environments such as dev, staging, and production without duplicating suites.

By configuring Environments and Environment Values, you can centralize environment-specific settings and then run suites across deployments, making it easier to compare results and maintain consistent test logic.

Test Plans and Suites

A test suite in Spur is a collection of related tests that validate specific functionality, with Flow View giving you a visual representation of test dependencies.

When you run a test suite, Spur executes tests in order, respects dependencies, and provides real-time feedback on status, progress, errors, and execution time, which makes Test Plans and suites a foundation for organizing your testing around features and user journeys.
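Dependency-aware ordering of this kind is a topological sort. The sketch below shows the general technique with Python's standard `graphlib` module (Python 3.9+); the test names and dependency map are hypothetical, and this is not a description of Spur's internal scheduler.

```python
from graphlib import TopologicalSorter

# Each test maps to the set of tests that must finish before it can start.
dependencies = {
    "login": set(),
    "add to cart": {"login"},
    "checkout": {"add to cart"},
    "order history": {"checkout"},
    "update profile": {"login"},
}

# static_order() yields every test after all of its prerequisites.
run_order = list(TopologicalSorter(dependencies).static_order())

for position, test_name in enumerate(run_order, start=1):
    print(position, test_name)
```

A cycle in the map (two tests that each depend on the other) raises `graphlib.CycleError`, which is a useful early signal that a suite's dependencies are misconfigured.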

Bulk Actions and Retry-style workflows

Spur supports multiple test execution methods, including scheduled tests, cached tests, manual execution, and CI/CD, so you can repeatedly run suites and tests as part of your regular workflow.

Using features like scheduling, snoozed tests, and cached runs, teams can re-run tests and keep execution focused on the most relevant suites, which functions as a practical retry pattern for stabilizing and iterating on coverage over time.

Reporting, Debugging, and Integrations

The Spur Dashboard gives a centralized view of currently running tests, past runs, scheduled tests, and recent failures, making it easier to monitor results and understand your testing environment.

For deeper analysis and debugging, Spur provides Statistics, full browser observability, video replay, console and network logs, and agent logs, so you can see step-by-step what happened in a run.

Spur's integrations turn those results into action.

With Jira and Linear, you can create detailed tickets directly from failures with screenshots, logs, and reproduction steps, while Slack, Email, and GitHub integrations handle real-time notifications, reports, and automated workflows in your existing tooling.

Why Customer Success Is Baked Into Spur's Product DNA

The Early Days of Customer Success

Even before Spur was born, we were talking to customers and validating the problem. At Spur, we've internalized a simple truth: our customers' success is our success.

Why Invest So Heavily in CS?

At Spur, we believe quality is core to every digital experience. No matter the company, everyone who has a digital presence wants to provide a high-quality journey for their customers and users - and that starts with testing.

Spur is an AI-native platform powering that foundation. Our product runs every day, with every release, and directly impacts how companies perform. But our agentic, AI-driven approach to testing is a shift from the old model—and that means it requires deep customer understanding.

Customer Success is a Team Sport: Company-Wide Integration

We insist customer success is a team sport. We've woven customer-centric thinking into the DNA of every department.


Everyone at Spur is deeply integrated in Customer Success. This diagram illustrates our holistic CS framework: the customer journey stages (onboarding, activation, expansion) are supported by Spur's services (blue circles on the left), measured by key metrics (green diamonds on the right), and fueled by a cross-functional team effort (bottom).

Engineering:

Our engineers don't hide behind feature backlogs and sprint boards, isolated from end-users. Instead, each engineer looks after 2–3 customer accounts. In practice, that means developers regularly sit in on customer calls and Spurring Sessions for the accounts they own.

Design:

Our design team is equally involved. Great UX in an AI-driven testing tool can be a differentiator, so our designers want to understand users deeply. They routinely analyze PostHog sessions and other analytics to see which features customers use and where they might get stuck.

Sales:

You might wonder, where does Sales fit after the deal is signed? At Spur, Sales doesn't throw the customer over the wall and disappear. Our sales team stays involved as a stakeholder in the customer's ongoing success. In fact, during handoff, the salesperson spends extensive time (often 3+ hours over multiple meetings) with the new customer.

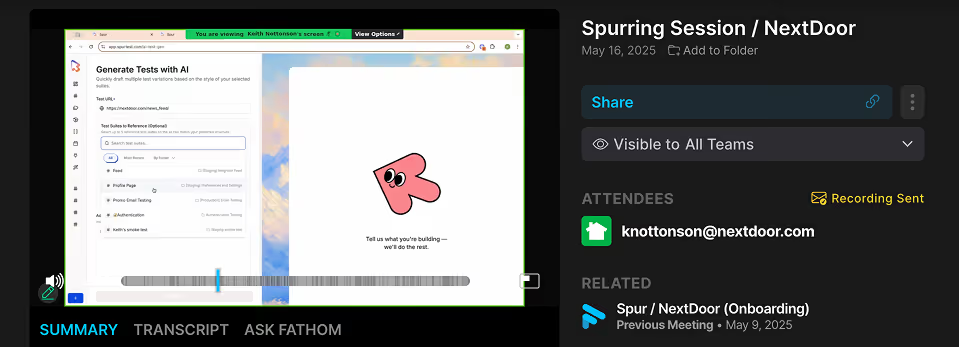

Spurring Sessions and the Power of Continuous Feedback

While Spur is a SaaS product, we offer customer support to all of our customers. Earlier we mentioned Spurring Sessions – a term you won't find in a generic CS handbook, because it's something we coined at Spur. Spurring Sessions are essentially high-touch, collaborative working sessions with our customers. Think of them as a blend between a coaching call, a consulting session, and a feedback forum, all rolled into one.

What makes Spurring Sessions particularly powerful is how they feed into our continuous feedback loop. Every session is an opportunity not just for the customer to learn from us, but for us to learn from the customer. For example, during a session, a customer might ask, "Can Spur do X?" If we hear questions like that repeatedly, it's a huge flag for us to improve UI or develop a new feature.

At Spur, customer success isn't just a department—it's a mindset. It shapes how we build, how we ship, and how we support. In an AI-first world, where technology evolves rapidly, the human feedback loop becomes even more essential. That's why we'll keep showing up—week after week, session after session—to ensure every customer gets more than a tool. They get a partner in quality.

AI, Tariffs & E-Com: A New Playbook for Profit

Agentic AI: The New Backbone of Resilient E-Commerce

Tariffs are climbing, uncertainty around global trade is at an all-time high, and online brands are facing immense pressure to cut costs without sacrificing quality or customer experience. Yet amid the uncertainty, a clear message is emerging from forward-thinking ecommerce brands: now's the time to innovate.

AI is pivotal in transforming e-commerce by enhancing operational efficiency, making it essential for businesses aiming to thrive in today's digital economy. - Brian Priest, CFO of eBay

One clear area of opportunity is in leveraging Agentic AI for your QA stack. QA is a high leverage space because it's work no engineer wants to be doing and, by freeing them up, they can devote their efforts to important tasks like new product development and innovation.

Maximize QA Impact on a Tighter Budget

QA testing has historically been a manual, resource-intensive process. With tariffs straining budgets, there's no room for inefficiency. AI agents automate repetitive test cases, catching critical errors early and freeing your team to focus on growth, not maintenance.

Protect your price-sensitive customers

With margins under threat, every bug becomes more costly. 89% of consumers say they'll abandon a brand after a negative digital experience—especially if they're feeling economic pressure. Agentic AI catches nuanced errors human testers might miss, protecting your reputation and revenue and boosting conversions.

Transform Core QA Processes with Agentic AI

1000+ tests in a month isn't just fast. It's what made bi-weekly releases and real test confidence possible. - Chloe Lu, E-Commerce Manager, LivingSpaces.com

Companies that adopt AI-driven testing see 50% faster test execution and 70% less test maintenance. These aren't marginal improvements—they're foundational changes. Agentic AI continuously adapts to site changes, UI updates, and user behavior, keeping your QA agile and responsive.

Cut real costs

With tariffs and tightening budgets, doing more with less isn't optional—it's essential. AI-powered QA helps teams expand test coverage, deploy confidently, and scale sustainably, all while reducing overhead.

Agentic AI isn't a luxury for calmer times; it's your strongest hedge against uncertainty today.

ROI with Spur

Spur's agentic AI helps e-commerce teams save time, cut costs, and deliver better digital experiences. Here's what our customers see:

- Up to 90% cost reduction in test case creation and maintenance

- 2–3× faster release cycles, powered by AI agents that execute and adapt in minutes

- 80–95% bug detection accuracy, even in edge cases manual QA typically misses

- >90% test coverage across critical revenue flows, with zero added headcount

- Less churn, more conversions: brands avoid the hidden cost of broken experiences

Ready to transform your testing?

Schedule a demo to see how Spur can handle all your QA, save development time and prevent costly bugs.